TL;DR

Microsoft Fabric has become the fastest-growing product in Microsoft’s history in just 21 months. Its unification of previously fragmented tools, built-in real-time intelligence, AI-driven data agents, and full CI/CD support make it a turning point for users. Going forward, Fabric won’t be just another platform to support — it will be the foundation of enterprise data and AI. For IT Ops, that means shifting from fragmented support to proactive enablement, governance, and automation.

What problem is Fabric solving?

Historically, Microsoft’s data stack was a patchwork: Synapse for warehousing, Data Factory for pipelines, Stream Analytics for real-time, Power BI for reporting. Each had its own UI, governance quirks, and operational playbook.

Fabric consolidates that fragmentation into a single SaaS platform. Every workload – engineering, science, real-time, BI – runs on OneLake as its common storage layer. One data copy, one security model, one operational pillar. That means less time spent reconciling permissions across silos, fewer brittle integrations, and a clearer line of sight on performance.

This is the first part of our series on Microsoft Fabric. Read more about

- When to start using Fabric

- How to set up your first Fabric workspace, and

- The basics of Fabric licensing

How does real-time intelligence change the game?

Data platforms used to mean waiting until the next day for updates. Fabric resets these expectations. With Real-Time Intelligence built in, organisations can analyse telemetry, IoT, and application events as they happen — and trigger actions automatically.

For IT Ops, this changes monitoring from reactive to proactive. Anomaly detection and automated alerts are no longer bespoke projects; they’re native capabilities. The platform itself becomes part of the operations toolkit, surfacing issues and even suggesting resolutions before they escalate.
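
To make the idea concrete, here is a minimal sketch of the kind of check that anomaly detection automates. It is plain Python rather than anything Fabric-specific, and the function name `detect_anomalies`, the window size, the threshold, and the sample telemetry are all illustrative assumptions.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate strongly from the recent window.

    A reading is flagged when it lies more than `threshold` standard
    deviations away from the mean of the previous `window` readings.
    """
    recent = deque(maxlen=window)
    anomalies = []
    for index, value in enumerate(readings):
        if len(recent) == window:
            mu = mean(recent)
            sigma = stdev(recent)
            # Skip the check when the window is flat to avoid dividing by zero.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(index)
        recent.append(value)
    return anomalies


# Hypothetical CPU-load telemetry with one obvious spike at position 7.
telemetry = [41, 42, 40, 43, 41, 42, 40, 95, 41, 42]
flagged = detect_anomalies(telemetry)
print(flagged)
```

With a platform that offers this natively, the same logic runs continuously against the event stream, and an alert or automated action takes the place of the `print` call.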

Is Fabric’s AI just Copilot?

So far, much of the AI conversation has centred on Copilot. But Fabric is pushing further, introducing data agents: role-aware, business-user-friendly assistants that can “chat with your data”.

The goal isn’t to replace analysts but to reduce bottlenecks. Business teams can query data directly, run sentiment analysis on CRM records, or detect churn patterns without submitting a ticket or waiting for a report build. IT Ops teams, in turn, can focus on platform health, governance, and performance, confident that access and security policies are enforced consistently.

How does Fabric fit into DevOps (DataOps) practices?

Fabric is closing the gap between data and software engineering. Every item, from a pipeline to a Power BI dataset, now supports CI/CD. GitHub integration is tightening, the Fabric CLI has gone open source, and the community is expanding rapidly.

For developers, this means fewer manual deployments, clearer audit trails, and the ability to fold Fabric artefacts into existing DevOps pipelines. Troubleshooting is also improving, with capacity metrics being redesigned for easier debugging and monitoring APIs opening new automation opportunities.

Why does this matter for IT operations going forward?

Fabric’s rapid progression is not just a vendor milestone. It signals a market demand for unified foundations, real-time responsiveness, AI-ready governance, and operational maturity.

As Fabric becomes the default data platform in the Microsoft ecosystem, IT operations will decide whether its promise translates into reliable, compliant, and scalable enterprise systems. From governance models to real-time monitoring to embedding Fabric into CI/CD, IT Ops will be the enabler.

What’s next for IT Ops with Fabric?

Fabric’s trajectory suggests it is on its way to becoming the operational backbone for AI-driven enterprises. For IT Ops leaders, the question is no longer if Fabric will be central, but how quickly they prepare their teams and processes to run it at scale.

Those who act early will position IT operations not as a cost centre, but as a strategic driver of enterprise intelligence.

Ready to explore how Microsoft Fabric can support your AI and data strategy? Contact our team to discuss how we can help you design, govern, and operate Fabric effectively in your organisation.

TL;DR:

Ops teams play a critical role in enabling citizen developers to build custom AI agents in Microsoft Copilot Studio. With natural language and low-code tools, non-technical staff can design workflows by simply describing their intent. The approach works well for structured processes but is less effective for complex file handling and multilingual prompts. To avoid compliance risks, high costs, or hallucinations, Ops teams must enforce guardrails with Data Loss Prevention, Purview, and Agent Runtime Protection Status. Adoption metrics, security posture, and clear business use cases signal when an agent is ready to scale. Real value comes from reduced manual workload and faster processes.

From chatbots to AI agents: lowering the barrier to entry

Copilot Studio has come a long way from its Power Virtual Agents origins. What was once a no-code chatbot builder has become a true agent platform that combines natural language authoring with low-code automation.

This shift means that “citizen developers” — business users, non-technical staff in finance, HR, or operations — can now design their own AI agents by simply describing what they want in plain English. For example:

“When an invoice arrives, extract data and send it for approval.”

Copilot Studio will automatically generate a draft workflow with those steps. Add in some guidance around knowledge sources, tools, and tone of voice, and the result is a working agent that can be published in Teams, Microsoft 365 Copilot, or even external portals.

This lowers the barrier to entry, but it doesn’t remove the need for structure, governance, and training. That’s where the Ops team comes in.

Good to know: Where natural language works — and where it doesn’t

The AI-assisted authoring in Copilot Studio is powerful, but it has limits. Citizen developers should know that:

- Strengths: Natural language works well for structured workflows and simple triggers (“if an RFP arrives, collect key fields and notify procurement”).

- Weaknesses: File handling is still a challenge. Unlike M365 Copilot or ChatGPT, Copilot Studio agents are not yet great at tasks like “process this document and upload it to SharePoint” purely from natural language prompts. These scenarios require additional configuration.

- Localisation gaps: Hungarian, like many other languages, isn’t yet natively supported, so prompts must be translated to English first, with the risk of losing substance.

For Ops teams, this means setting realistic expectations with business users and stepping in when agents need to move from prototype to production.

Setting guardrails: governance, security, and compliance

Citizen development without governance can quickly become a compliance risk — or result in unexpected costs. Imagine a team lowering content moderation to “low” and publishing an agent that hallucinates, uses unauthorised web search, or leaks sensitive data.

To prevent these scenarios, Ops teams should establish clear guardrails:

- Train citizen developers first on licenses, costs, knowledge sources, and prompting.

- Apply DLP policies — Power Platform Data Loss Prevention rules can extend into Copilot Studio, preventing risky connectors or external file sharing.

- Leverage Microsoft Purview to enforce compliance and detect policy violations across agents.

- Monitor Protection Status: each Copilot Studio agent displays a real-time security posture, flagging issues before they escalate.

- Define a governance model — centralised (Ops reviews every agent before publishing) or federated (teams experiment, Ops provides oversight). For organisations new to citizen development, centralised control is often the safer path.

The goal is to strike the right balance: empower citizen developers, but ensure guardrails keep development secure and compliant.
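
A centralised review can be partly scripted. The sketch below shows one possible pre-publish check, assuming Ops keeps a simple inventory of agent settings. The dictionary layout, the `APPROVED_CONNECTORS` allowlist, and the `review_agent` function are hypothetical and do not represent a Copilot Studio or Power Platform API.

```python
# Hypothetical allowlist maintained by the Ops team; the names are illustrative.
APPROVED_CONNECTORS = {"SharePoint", "Dataverse", "Office 365 Outlook"}


def review_agent(agent):
    """Return a list of governance findings for one agent definition.

    `agent` is a plain dict standing in for whatever inventory export
    your organisation uses; it is not a Copilot Studio API object.
    """
    findings = []
    blocked = sorted(set(agent["connectors"]) - APPROVED_CONNECTORS)
    if blocked:
        findings.append(f"unapproved connectors: {', '.join(blocked)}")
    if agent.get("content_moderation", "high") == "low":
        findings.append("content moderation set to low")
    if agent.get("web_search", False):
        findings.append("web search enabled without approval")
    return findings


draft_agent = {
    "name": "Invoice helper",
    "connectors": ["SharePoint", "Dropbox"],
    "content_moderation": "low",
    "web_search": False,
}
issues = review_agent(draft_agent)
for issue in issues:
    print(f"{draft_agent['name']}: {issue}")
```

An empty findings list would mean the agent passes this particular check; it does not replace DLP policies or a human review.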

Scaling and adoption: knowing when to step in

Citizen-built agents can add value quickly, but Ops needs to know when to take over. Some key signs:

- Adoption metrics — Copilot Studio provides data on engagement, answer quality, and effectiveness scores. If an agent is gaining traction, it may need Ops support to harden and scale.

- Security posture — monitoring Protection Status helps Ops see when an agent needs adjustments before wider rollout.

- Clear use case fit — when a team builds an agent around a defined business process (invoice approval, employee onboarding), it’s a good candidate to formalise and extend.

Ops teams should also set up lifecycle management and ownership frameworks to avoid “shadow agents” that nobody maintains.
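
As a rough illustration of lifecycle management, the sketch below flags agents that have no owner or have not been reviewed recently. The inventory format, the 90-day limit, and the function name `find_shadow_agents` are assumptions made for this example, not features of Copilot Studio.

```python
from datetime import date, timedelta


def find_shadow_agents(agents, today, max_idle_days=90):
    """Return names of agents with no owner or no recent review."""
    cutoff = today - timedelta(days=max_idle_days)
    flagged = []
    for agent in agents:
        unowned = not agent.get("owner")
        stale = agent["last_reviewed"] < cutoff
        if unowned or stale:
            flagged.append(agent["name"])
    return flagged


# Hypothetical inventory kept by the Ops team.
inventory = [
    {"name": "Onboarding guide", "owner": "hr-ops", "last_reviewed": date(2025, 5, 20)},
    {"name": "RFP intake", "owner": "", "last_reviewed": date(2025, 6, 1)},
    {"name": "Old FAQ bot", "owner": "it-ops", "last_reviewed": date(2024, 11, 3)},
]
shadow = find_shadow_agents(inventory, today=date(2025, 6, 30))
print(shadow)
```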

How to measure the real value of custom agents

Metrics like adoption, effectiveness, and engagement tell part of the story. But the real measure of success is whether agents help reduce manual workload, accelerate processes, and cut costs.

For example:

- Does the HR onboarding agent save time for hiring managers?

- Does the finance approval agent speed up invoice processing and payment approvals?

- Is there a reduction in the number of tickets that Ops teams have to handle because business users solve their needs with agents?

These business outcomes matter more than raw usage stats, and Ops is best positioned to track them.
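
One simple way to put numbers on these questions is to compare handling time before and after the agent. The sketch below shows the arithmetic only; every figure in it is a placeholder, not a measured result, and the function names are made up for this example.

```python
def monthly_hours_saved(cases_per_month, minutes_before, minutes_after):
    """Estimate hours saved per month from the change in handling time."""
    saved_minutes = (minutes_before - minutes_after) * cases_per_month
    return saved_minutes / 60


def net_monthly_value(hours_saved, hourly_cost, agent_running_cost):
    """Value of the time saved minus what the agent costs to run."""
    return hours_saved * hourly_cost - agent_running_cost


# Placeholder figures for an invoice-approval agent, not measured results.
hours = monthly_hours_saved(cases_per_month=400, minutes_before=12, minutes_after=3)
value = net_monthly_value(hours, hourly_cost=40, agent_running_cost=500)
print(hours, value)
```

A negative result would suggest the agent costs more to run than the time it saves, which is a useful signal before scaling it further.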

Takeaways for Ops teams

Enabling citizen developers in Copilot Studio is all about finding the right balance. You want to give business users the freedom to experiment with natural language tools and ready-made templates. At the same time, it helps to teach them the basics — how to prompt effectively, what knowledge sources to use, and even what the licensing costs look like.

Of course, freedom comes with responsibility. That’s why Ops needs to set guardrails through DLP, Purview, and centralised reviews. And as agents start getting real traction, it’s important to keep an eye on adoption metrics so you know when a quick experiment is ready to be treated as an enterprise-grade solution.

When you get this balance right, it becomes a scalable and secure way for the business to automate processes — with Ops guiding the journey rather than standing in the way.

Ready to see how Copilot Studio could empower your teams? Get in touch with our experts and discuss your use case!

TL;DR:

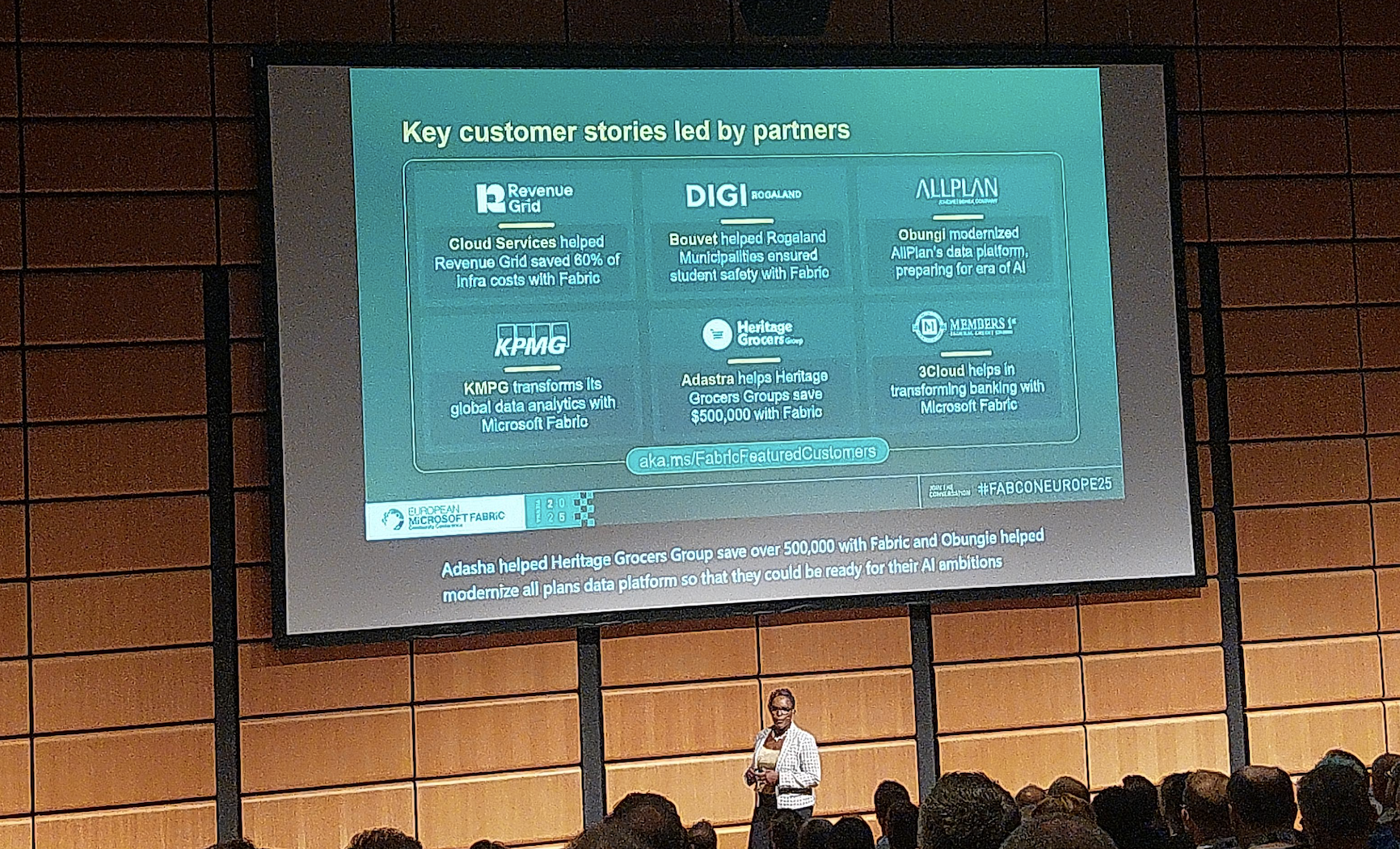

Scaling AI isn’t just about the model — it’s about the data foundation. Without a unified, modern platform, most AI projects stay stuck in pilot mode. At the recent Microsoft Fabric Conference, we saw firsthand how Fabric delivers that missing foundation: one copy of data, integrated analytics, built-in governance, and AI-ready architecture. The results are faster scaling, higher accuracy, and greater ROI from AI investments.

The illusion of quick wins with AI

Last week, our team attended the Microsoft Fabric Conference in Vienna, where one theme came through loud and clear: AI without a modern data platform doesn’t scale.

It’s a reality we’ve seen with many organisations. AI pilots often succeed in a controlled environment — a chatbot here, a forecasting model there — but when teams try to scale across the enterprise, projects stall.

The reason is always the same: data. Fragmented, inconsistent, and inaccessible data prevents AI from becoming a true enterprise capability. What looks like a quick win in one corner of the business doesn’t translate when the underlying data foundation can’t keep up.

The core problem: data that doesn’t scale

For AI initiatives to deliver value at scale, organisations typically need three things in their data:

- Volume and variety — broad, representative datasets that capture the reality of the business.

- Quality and governance — data that is accurate, consistent, and compliant with policies and regulations.

- Accessibility and performance — the ability to access and process information quickly and reliably for training and inference.

Yet in many enterprises, data still lives in silos across ERP, CRM, IoT, and third-party applications. Legacy infrastructure often can’t handle the processing power that AI requires, while duplicated and inconsistent data creates trust issues that undermine confidence in outputs.

On top of that, slow data pipelines delay projects and drive up costs. These challenges explain why so many AI initiatives never move beyond the pilot phase.

The solution: a modern, unified data platform

A modern data platform doesn’t just centralise storage; it makes data usable at scale. That means unifying enterprise and external data within a single foundation and enforcing governance so that information is clean, secure, compliant, and reliable by default.

It must also deliver the performance required to process large volumes of data in real time for demanding AI workloads, while providing the flexibility to work with both structured sources like ERP records and unstructured content such as text, images, or video.

This is exactly the gap Microsoft Fabric is built to close.

Enter Microsoft Fabric: AI’s missing foundation

At the conference, we heard repeatedly how Fabric is turning AI projects from disconnected experiments into enterprise-scale systems.

Fabric isn’t a single tool. It’s a complete data platform designed for the AI era — consolidating capabilities that used to require multiple systems:

- OneLake, one copy of data — no duplication, no confusion; store once, use everywhere.

- Integrated analytics — data engineering, science, real-time analytics, and BI in one platform.

- Built-in governance — security, compliance, and lineage embedded by design.

- AI-ready architecture — seamless with Azure ML, Copilot, and Power Platform.

- Dataverse + Fabric — every Dataverse project is now effectively a Fabric project, making operational data part of the analytics foundation.

- Improved developer experience — new features reduce friction and make it easier to turn raw data into usable insights.

- Agentic demos — highlight why structured data preparation is more critical than flashy models.

- Fabric Graph visualization — reveals relationships across the data landscape and unlocks hidden patterns.

The business impact

The message is clear: Fabric isn’t just a data tool — it’s the foundation that finally makes AI scale.

Early adopters of Fabric are already seeing results:

- 70% faster data prep for AI and analytics teams.

- Global copilots launched in months, not years.

- Lower infrastructure costs thanks to one copy of data instead of endless duplication.

Make your AI scalable, reliable, and impactful with Microsoft Fabric

AI without a modern data platform is fragile. With Microsoft Fabric, enterprises move from isolated pilots to enterprise-wide transformation.

Fabric doesn’t just modernise data. It makes AI scalable, reliable, and impactful.

Don’t let fragile data foundations hold back your AI strategy. Talk to our experts to explore how Fabric can unlock AI at scale for your organisation.

TL;DR:

Public AI tools like ChatGPT create security and compliance risks because you can’t control where sensitive data goes. Copilot Studio solves this by running inside your Microsoft 365 tenant, inheriting existing permissions, enforcing tenant-level data boundaries, and aligning with Microsoft’s Responsible AI standards and residency protections. With proper governance — from data cleanup and Data Loss Prevention to connector control and clear usage policies — you can enable safe, compliant AI adoption that builds trust and empowers employees without risking data leaks or reputational damage.

“How do we give employees access to AI tools without sensitive data leaking to public models?”

It’s the first question IT operations and compliance leaders need to consider when AI adoption comes up — and for good reason. While tools like ChatGPT are powerful, they aren’t built with enterprise governance in mind. As a result, AI usage remains uncontrolled, potentially exposing sensitive information.

The conversation is no longer about if employees will use AI, but how to allow it without risking data loss, non-compliance, or reputational damage.

In this post, we explore how you can deploy Copilot Studio securely to give teams the AI capabilities they want while keeping data firmly within organisational boundaries.

The governance challenge

Most free, public AI tools have one major drawback: you can’t control what happens to the data you give them. Paste in a contract or an HR document, and it could be ingested into a public model with no way to retract it.

For IT leaders, that’s an impossible position:

- Block access entirely and watch shadow AI usage grow.

- Allow access and risk sensitive data leaving your control.

What you need is a way to enable AI while ensuring all information stays securely within the organisation’s boundaries.

How Copilot Studio handles security and data

Copilot Studio is designed to work with — not around — your existing Microsoft 365 security model. That means:

- Inherited permissions: A Copilot agent can only retrieve SharePoint or OneDrive files the user already has access to. If permissions are denied, the agent can’t access the file. No separate AI-specific access setup is required.

- Tenant-level data boundaries: All processing happens within Microsoft’s secure infrastructure, backed by Azure OpenAI. There’s no public ChatGPT endpoint — data stays within your private tenant.

- Responsible AI principles: Microsoft applies its Responsible AI Standard, ensuring AI is deployed safely, fairly, and transparently.

For European customers, Copilot Studio also aligns with the EU Data Boundary commitment, keeping data processing inside the EU wherever possible. Similar residency protections apply globally under Microsoft’s Advanced Data Residency and Multi-Geo capabilities.
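
The inherited-permissions principle is often called security trimming: results are filtered by what the requesting user may already see. The following is a conceptual sketch only. The `Document` class, the group names, and `visible_documents` are invented for illustration and say nothing about how Microsoft implements this internally.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    title: str
    allowed_groups: set = field(default_factory=set)


def visible_documents(user_groups, documents):
    """Keep only documents that share at least one group with the user."""
    return [doc for doc in documents if doc.allowed_groups & set(user_groups)]


# Hypothetical document library with group-based access.
library = [
    Document("Travel policy", {"all-staff"}),
    Document("Salary bands", {"hr-admins"}),
    Document("Vendor contract", {"legal", "procurement"}),
]

# A procurement user never sees the HR file, so an agent acting for
# that user has nothing from it to quote.
results = visible_documents({"all-staff", "procurement"}, library)
titles = [doc.title for doc in results]
print(titles)
```

The practical consequence is the one described above: if file permissions are too broad, the agent's reach is too broad as well.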

Governance in practice

Deploying Copilot Studio securely takes more than a few clicks. Successful rollouts include:

- Data readiness

Many organisations have poor data hygiene — redundant, outdated, or wrongly shared files. Before enabling Copilot, clean up data stores, remove unnecessary content, and confirm access rights. If Copilot can access it, so can employees with matching permissions.

- Data loss prevention

Use Microsoft’s built-in Data Loss Prevention (DLP) capabilities to stop Copilot from accessing or exposing sensitive information. At the Power Platform level (which covers Copilot Studio), DLP policies focus on controlling connectors; for example, blocking connectors that could pull data from unapproved systems or send it outside your governance boundary.

Beyond Copilot Studio, Microsoft Purview DLP offers a broader safety net. It protects sensitive data across Microsoft 365 apps (Word, Excel, PowerPoint), SharePoint, Teams, OneDrive, Windows endpoints, and even some non-Microsoft cloud services.

By combining connector-level controls with Purview’s sensitivity labels and classification policies, you can flag high-risk content such as medical records or salary data, and configure DLP policies that prevent Copilot from retrieving or surfacing it.

- Connector control

Remove unnecessary connectors to prevent Copilot from accessing data outside your governance framework.

- Clear internal guidance

Publish company-specific usage rules. Load the documentation into Copilot Studio so employees can query an internal knowledge base before asking questions that rely on external or unverified sources.

- Escalation paths

For complex or sensitive questions, integrate Copilot Studio with ticketing systems or expert routing — for example, automatically opening an omnichannel support case.

Building trust in AI adoption

Security isn’t the only barrier to AI adoption — trust plays a critical role too. Employees, legal teams, and executives need confidence that AI tools won’t create new liabilities. Microsoft has taken several steps to address these concerns:

- Copyright protection: Under its Copilot Copyright Commitment, Microsoft stands behind customers if AI-generated output triggers third-party copyright claims, covering legal defence and costs.

- Compliance leadership: Microsoft has been proactive in aligning AI services with global and regional legislation, from the EU Data Boundary to sector-specific regulations.

- Responsible use by design: The company’s Responsible AI principles ensure AI is developed and deployed with fairness, accountability, transparency, and privacy as core requirements.

For IT leaders, this means adopting Copilot Studio isn’t just a technical exercise but an opportunity to establish governance, legal assurance, and ethical use standards that will support AI adoption for years to come.

Why AI governance for Copilot Studio can’t wait

Microsoft has been proactive on AI legislation and compliance since the start, with explicit commitments on data protection and even AI copyright indemnification. But no matter how robust the vendor’s safeguards, governance still depends on your internal policies and configuration.

The earlier you establish these guardrails, the sooner you can empower teams to innovate without risk — and avoid retrofitting controls after a security incident.

Need help? Book your free readiness audit to see exactly where your governance gaps are and how to fix them before rollout so you can deploy Copilot Studio with confidence.

TL;DR:

Legacy ERP workflows rarely map cleanly into Dynamics 365. Familiar screens, custom approvals, and patched permissions often break because Dynamics 365 enforces a modern, role-based model with standardised navigation and workflow logic. This isn’t a bug but the natural result of moving from heavily customised systems to a scalable platform. To avoid adoption failures, don’t replicate the old system screen by screen. Focus on what users actually need, rebuild tasks with native Dynamics 365 tools, redesign security around duties and roles, and test real scenarios continuously. Migration is your chance to modernise, and if you align workflows with Dynamics 365 patterns, you’ll gain a system that’s more secure, more scalable, and better suited to how your business works today.

Why your ERP workflows fail after migration

You’ve migrated the data. You’ve configured the system. But now your field teams are stuck.

They can’t find the screens they used to use. The approvals they rely on don’t trigger. And the reports they count on? Missing key data.

This isn’t a technical glitch. It’s a user experience mismatch.

When companies move from legacy ERP systems to Dynamics 365, the shift isn’t just in the database. The entire way users interact with the system changes, from navigation paths and field layouts to permission models and process logic.

If you don’t redesign around those changes, your ERP transformation will quietly break the very workflows it was meant to improve.

This is the fourth part of our series on ERP migration. Read more about

- Our playbook for a successful ERP migration

- Reasons your ERP migration takes longer than expected, and

- How to speed up ERP migration without compromising on quality

Dynamics 365 isn’t your old ERP — and that’s the point

Legacy systems were often customised beyond recognition. Buttons moved, fields were renamed, permissions manually patched. As a result, they were highly tailored systems that made sense to a small group of users but were nearly impossible to scale or maintain.

Dynamics 365 takes a different approach. It offers a modern, role-based experience that works consistently across modules. But that means some of your old shortcuts, forms, and approvals won’t work the same way — or at all.

This can catch users off guard. Especially if no one has taken the time to explain why the system changed, or to align the new setup with how teams actually work.

Where the breakage usually happens

Navigation

Field engineers can’t find the work order screens they’re used to. Finance can’t locate posting profiles. Procurement doesn’t know how to filter vendor invoices. Familiar menus are gone and replaced with new logic that’s more powerful, but also less intuitive if you’re coming from a heavily customised legacy system.

Permissions

Old ERPs often relied on manual access grants or flat permission sets. In Dynamics 365, role-based security is more structured, and less forgiving. If roles aren’t mapped correctly, users lose access to critical features or gain access they shouldn’t have.

Workflow logic

Your old approval chains or posting setups may not map cleanly to Dynamics 365. For example, workflow conditions that relied on specific field values may behave differently now, or require reconfiguration using the new workflow engine.

Day-to-day tasks

Sometimes it’s as simple as a missing field or a renamed dropdown. But that can be enough to stall operations if users aren’t involved in the migration process and given time to learn the new flow.

How to avoid workflow disruption in your Dynamics 365 migration

Don’t try to copy the old system screen for screen. That’s a common mistake and it leads to frustration. Instead, map legacy processes to Dynamics 365 patterns. Focus on what the user is trying to achieve, not what the old screen looked like.

Start with your core user tasks.

- What does a finance user need to do each day?

- What about warehouse staff?

- Field service engineers?

Identify their critical workflows, then rebuild them in Dynamics 365 using native forms, views, and automation.

Review and rebuild your security model. Role-based security is at the heart of Dynamics 365. You’ll need to define duties, privileges, and roles properly, not just copy old access tables. Test for least privilege and ensure segregation of duties.
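
A segregation-of-duties test can be sketched in a few lines. The example below checks whether any user's combined roles grant two duties that should stay separate. The role names, duty names, and conflict pairs are hypothetical placeholders and would come from your own security design, not from a Dynamics 365 export format.

```python
# Hypothetical role-to-duty mapping; real duty names come from your own design.
ROLE_DUTIES = {
    "AP clerk": {"enter vendor invoice", "maintain vendor"},
    "AP manager": {"approve vendor invoice", "release payment"},
    "Warehouse worker": {"post goods receipt"},
}

# Pairs of duties that one person should not hold at the same time.
CONFLICTING_DUTIES = [
    ("enter vendor invoice", "approve vendor invoice"),
    ("maintain vendor", "release payment"),
]


def duties_for(roles):
    """Union of all duties granted by the given roles."""
    duties = set()
    for role in roles:
        duties |= ROLE_DUTIES[role]
    return duties


def sod_violations(user_roles):
    """Map each user to the conflicting duty pairs their roles combine."""
    report = {}
    for user, roles in user_roles.items():
        held = duties_for(roles)
        hits = [pair for pair in CONFLICTING_DUTIES if set(pair) <= held]
        if hits:
            report[user] = hits
    return report


assignments = {
    "dana": ["AP clerk"],
    "lee": ["AP clerk", "AP manager"],
}
violations = sod_violations(assignments)
print(violations)
```

Running a check like this against every planned role assignment before go-live is cheaper than discovering the conflict in an audit.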

Test user scenarios in every sprint. Don’t wait for UAT to catch usability issues. Include key personas in each migration cycle. Run scenario-based smoke tests and regression checks. Use test environments that mirror production, and validate real-life tasks end to end.

Provide context and support. Users aren’t just learning a new tool — they’re changing habits built over years. Train them with use-case-driven sessions, not generic walkthroughs. Show them how the new process works and why it changed.

Migration is your chance to modernise. Don’t waste it

Your ERP isn’t just a backend system. It’s where users spend hours every day getting work done. If it doesn’t work the way they expect, adoption will suffer, and so will your transformation goals.

✅ Legacy workflows may not map to D365 one-to-one

✅ Navigation, permissions, and logic will differ

✅ Redesign processes around D365 patterns, not legacy layouts

✅ Review role-based permissions carefully

✅ Involve users early and test real scenarios every sprint

Done right, this isn’t just damage control. It’s an opportunity to rebuild your processes in a way that’s more secure, more scalable, and actually aligned with how your business works today.

Just because your old system let you do something doesn’t mean it’s how your new system should work, and now’s the perfect time to make that shift. Consult our experts before you begin your migration and set the project up for success.

TL;DR

ERP projects don’t collapse because of the software — they collapse when messy, inconsistent, or incomplete data is pushed into the new system. Bad data leads to broken processes, frustrated users, unreliable reports, and compliance risks. The only way to avoid this is to treat data quality as a business priority, not an IT afterthought. That means profiling and cleansing records early, aligning them to Dynamics 365’s logic instead of legacy quirks, validating and reconciling every migration cycle, and involving business owners throughout. Clean data isn’t a nice-to-have — it’s the foundation of a successful ERP rollout.

Your ERP is only as good as your data

You can have the best ERP system in the world. But if your data is incomplete, inconsistent, or poorly structured, the result is always the same: broken processes, frustrated users, and decisions based on guesswork.

We’ve seen Dynamics 365 projects delayed or derailed not because of the software — but because teams underestimated how messy their data really was.

So let’s talk about what happens when you migrate bad data, and what you should do instead.

This is the third part of our series on ERP migration. Read more about

- Our playbook for a successful ERP migration

- How to speed up ERP migration without compromising on quality, and

- How to migrate to D365 from legacy technology

What bad data looks like in an ERP system

A new ERP system is supposed to streamline your operations, not create more chaos. But if your master data isn’t accurate, it starts causing problems right away.

Broken workflows

- Sales orders that fail because customer IDs don’t match

- Invoices that bounce because VAT settings are missing

- Stock that disappears because unit of measure conversions weren’t mapped

User frustration

- Employees waste hours trying to fix errors manually

- They lose confidence in the system

- Adoption suffers. Shadow systems start creeping in

Poor reporting

- Your shiny new dashboards don’t reflect reality.

- Finance teams can’t close the books.

- Operations can’t trust inventory figures.

- Leadership can’t make informed decisions.

Compliance risks

- Missing fields

- Outdated codes

- Unauthorised access to sensitive records

- Inconsistent naming conventions that make data hard to track

All of these can lead to audit issues or worse.

And yet, despite the stakes, data quality is often treated as a “technical detail” — something IT will sort out later. That’s exactly how costly mistakes happen.

Why data quality needs to be a business priority — not an IT afterthought

Data quality isn’t just about spreadsheets. It’s about trust, efficiency, and the ability to run your business.

Good ERP data supports business processes. It aligns with how teams actually work. And it evolves with your organisation — if you put the right structures in place.

Too many ERP projects approach migration as a technical handover: extract, transform, load. But that mindset ignores a crucial reality — only business users know what the data should say.

That’s why successful migrations start with cross-functional ownership, not just technical execution.

What good data management looks like before ERP migration

Identify and fix bad data early

Run profiling tools or even basic Excel checks to spot issues:

- duplicates,

- incomplete fields,

- outdated reference codes.

Don’t wait for them to break test environments.
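
If a dedicated profiling tool isn't available, a short script covers the three checks listed above. This is a minimal sketch assuming customer records exported as dictionaries; the field names, the `VALID_PAYMENT_TERMS` set, and the sample rows are illustrative, not a Dynamics 365 schema.

```python
from collections import Counter

# Hypothetical list of reference codes still valid in the target system.
VALID_PAYMENT_TERMS = {"NET30", "NET60"}
REQUIRED_FIELDS = ("customer_id", "name", "vat_number", "payment_terms")


def profile(records):
    """Summarise duplicates, incomplete rows, and outdated codes."""
    id_counts = Counter(r["customer_id"] for r in records)
    duplicates = sorted(cid for cid, n in id_counts.items() if n > 1)
    incomplete = [
        r["customer_id"]
        for r in records
        if any(not str(r.get(f) or "").strip() for f in REQUIRED_FIELDS)
    ]
    outdated = [
        r["customer_id"]
        for r in records
        if r.get("payment_terms") and r["payment_terms"] not in VALID_PAYMENT_TERMS
    ]
    return {"duplicates": duplicates, "incomplete": incomplete, "outdated": outdated}


customers = [
    {"customer_id": "C001", "name": "Acme", "vat_number": "HU123", "payment_terms": "NET30"},
    {"customer_id": "C002", "name": "Borealis", "vat_number": "", "payment_terms": "NET60"},
    {"customer_id": "C001", "name": "ACME Ltd", "vat_number": "HU123", "payment_terms": "30D"},
]
summary = profile(customers)
print(summary)
```

The output is a worklist for the data owners: which IDs appear twice, which rows miss a required field, and which still carry a retired code.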

Fit your data to the new system — not the old one

Legacy ERPs allowed all sorts of workarounds. Dynamics 365 has stricter logic.

You’ll need to normalise values, align with global address book structures, and reformat transactional data to fit new entities.

Validate everything

Don’t assume data has moved just because the ETL pipeline ran.

Build checks into each stage:

- record counts

- value audits

- referential integrity checks.

Set up test environments that reflect real-world usage.
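
Two of these checks, record counts and referential integrity, are straightforward to automate. The sketch below assumes rows are available as lists of dictionaries after each load; the function names and sample data are hypothetical.

```python
def count_check(source_rows, target_rows):
    """Compare row counts between the extract and the loaded table."""
    return {
        "source": len(source_rows),
        "target": len(target_rows),
        "match": len(source_rows) == len(target_rows),
    }


def orphaned_references(child_rows, parent_rows, foreign_key, parent_key):
    """Return child rows whose foreign key has no matching parent row."""
    known = {row[parent_key] for row in parent_rows}
    return [row for row in child_rows if row[foreign_key] not in known]


loaded_customers = [{"id": "C001"}, {"id": "C002"}]
loaded_orders = [
    {"order": "SO-1", "customer_id": "C001"},
    {"order": "SO-2", "customer_id": "C009"},  # points at a customer that was not loaded
]

counts = count_check(source_rows=[1, 2, 3], target_rows=[1, 2])
orphans = orphaned_references(loaded_orders, loaded_customers, "customer_id", "id")
print(counts)
print(orphans)
```

A mismatch in either check is a reason to stop the cycle and investigate before the next stage runs.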

Involve business users early

IT can move data. But only process owners know if it’s right.

Loop in finance, sales, procurement, inventory — whoever owns the source and target data. Get their input before migration begins.

Plan for reconciliation

Post-load, run audits to confirm data landed correctly. Compare source and target figures.

Validate key reports. Fix gaps before go-live, not after.
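
Comparing source and target figures can be as simple as summing balances per account on both sides and listing the differences. This is one possible sketch; the `account` and `balance` field names and the sample rows are assumptions, and `Decimal` is used so that currency amounts are not distorted by floating-point rounding.

```python
from collections import defaultdict
from decimal import Decimal


def totals_by_key(rows, key, amount):
    """Sum an amount field per key, using Decimal to avoid float drift."""
    totals = defaultdict(Decimal)
    for row in rows:
        totals[row[key]] += Decimal(str(row[amount]))
    return dict(totals)


def reconcile(source_rows, target_rows, key="account", amount="balance"):
    """Return {key: (source_total, target_total)} for every mismatch."""
    source = totals_by_key(source_rows, key, amount)
    target = totals_by_key(target_rows, key, amount)
    differences = {}
    for k in sorted(set(source) | set(target)):
        s = source.get(k, Decimal("0"))
        t = target.get(k, Decimal("0"))
        if s != t:
            differences[k] = (s, t)
    return differences


# Hypothetical extracts from the legacy system and the migrated ledger.
legacy = [
    {"account": "1000", "balance": "250.10"},
    {"account": "1000", "balance": "49.90"},
    {"account": "2000", "balance": "75.00"},
]
migrated = [
    {"account": "1000", "balance": "300.00"},
    {"account": "2000", "balance": "70.00"},
]
gaps = reconcile(legacy, migrated)
print(gaps)
```

Accounts missing entirely on one side show up as a total of zero, so dropped records surface alongside wrong amounts.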

Data quality isn’t a nice-to-have. It’s a critical success factor

ERP migration is the perfect time to raise the bar. But to do that, you have to treat data quality as a core deliverable — not a side task.

That means budgeting time and effort for:

- Cleansing legacy records

- Enriching and standardising key fields

- Testing and validating multiple migration cycles

- Assigning ownership to named individuals

- Reviewing outcomes collaboratively with business teams

When done right, good data management saves time, cost, and credibility — not just during the migration, but long after go-live.

Clean data makes or breaks your ERP project

If you migrate messy, unvalidated data into Dynamics 365, the problems don’t go away — they multiply. This looks like:

- Broken processes

- Unhappy users

- Useless reports

- Extra costs

- Compliance headaches

But with the right approach, data becomes a strength — not a liability.

- Profile and clean your data early

- Fit it to Dynamics 365, not legacy quirks

- Validate, reconcile, and document

- Involve business users throughout

- Treat data quality as a strategic priority

A clean start in Dynamics 365 begins with clean data. And that’s something worth investing in.

The good news is that these potential mistakes can be avoided early on with a detailed migration plan. Consult with our experts to create one for your team.